Beyond LLMs: Why SupportLogic AutoQA uses a precision Multi-Model ML Stack

Why scoring 13 nuanced agent behaviors across voice, text, and time requires far more than asking an LLM to “evaluate this ticket.”

Machine Learning Engineering · AutoQA Deep Dive

In the era of large language models, a natural instinct emerges: if you need to evaluate quality in customer support conversations, why not just pipe the transcript into an LLM and ask it to judge? The question seems almost too obvious to pose. Yet SupportLogic’s AutoQA system, the engine that scores agent behaviors across tens of thousands of support cases, is not built that way. Not because the team lacked access to frontier models, but because the problem demands something more precise, more defensible, and more architecturally intentional.

This post unpacks the full AutoQA ML stack: what each model does, why it was chosen for that specific job, and where LLMs do and don’t fit into the picture.

The Problem Space: Why AutoQA Is Hard

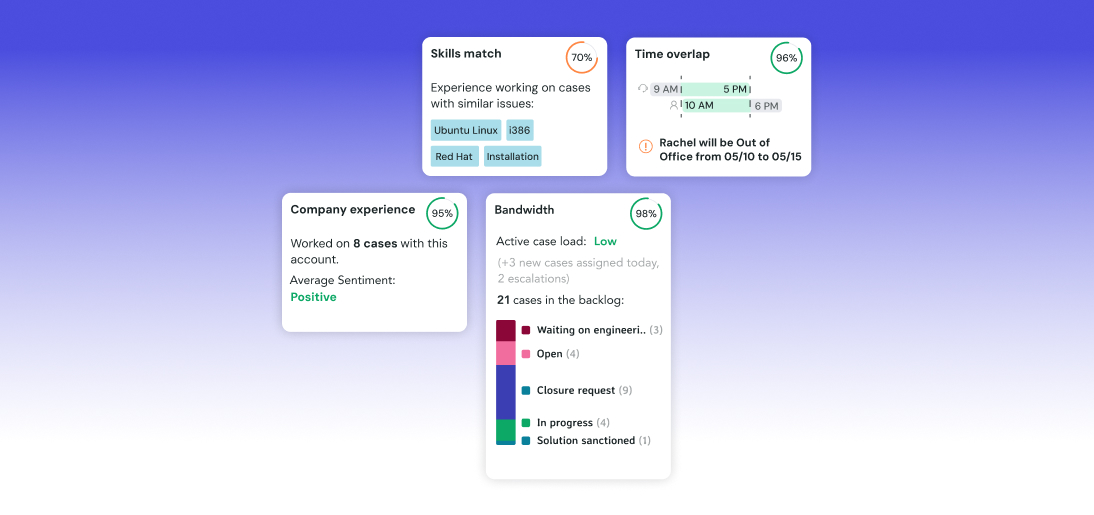

AutoQA evaluates 13 distinct agent behaviors — things like proper greetings, empathy, probing questions, successful resolution, SLA adherence, and spelling/grammar. Each behavior operates at a different granularity, on different signal modalities, and has different tolerance for false positives.

Consider what it actually takes to answer the deceptively simple question: “Did the agent demonstrate empathy?” You need to parse the sentiment arc of the conversation, identify which speaker said what (speaker diarization), understand the customer’s escalating frustration in context, and then judge a specific comment or the whole ticket. Each of those sub-problems is a meaningful ML task. Stack 13 such behaviors together and you have a system that must be fast, deterministic, auditable, and accurate at scale.

Why not just use a single LLM for everything?

LLMs generate fluent text, but they calculate poorly, hallucinate trends when context is sparse, vary outputs non-deterministically, and are expensive to run at the volume required. For behaviors involving timing (SLA adherence), audio emotion (acoustics), or precise pass/fail scoring used in agent reviews and QBRs, LLM outputs aren’t reliable enough on their own — and the cost-per-case math doesn’t work. More on this later.

The Multi-Model Pipeline Architecture

AutoQA’s architecture follows a strict division of labor: specialized models own classification and measurement; LLMs own explanation and narrative. Scoring is deterministic Python. Nothing crosses those lines.

    ┌──────────────────────────────────────────────────┐
    │  INPUT: Voice file + Transcript + Case Metadata  │
    └─────────────────────────▼────────────────────────┘
           ┌──────────────────▼───────────────────┐
           │ LAYER 1: Pre-processing              │
           │ • Speaker Diarization                │
           │ • Sentiment Detection (BERT-based)   │
           │ • Acoustics Tonality (TorchServe)    │
           └──────────────────────────────────────┘
           ┌──────────────────▼───────────────────┐
           │ LAYER 2: Behavior Classification     │
           │ • RoBERTa-Base (text behaviors)      │
           │ • SpaCy rules (pattern behaviors)    │
           │ • Python timing scripts (SLA)        │
           │ • GenAI classifiers (complex cases)  │
           └──────────────────────────────────────┘
           ┌──────────────────▼───────────────────┐
           │ LAYER 3: Deterministic Scoring       │
           │ • Weighted score (0–100) in Python   │
           │ • Grade (A–F) computed, not inferred │
           └──────────────────────────────────────┘
           ┌──────────────────▼───────────────────┐
           │ LAYER 4: Generative Synthesis (LLM)  │
           │ • Quality Agent narrative            │
           │ • Coaching Agent narrative           │
           │ • Generated in a single LLM pass     │
           └──────────────────────────────────────┘

Each layer is independently upgradeable. The scoring layer never touches a neural network. The LLM layer never touches the scoring math. This modularity is by design — and the reason the system can meet strict cost targets while still producing rich, human-readable outputs.

Model by Model: What Each Component Does

BERT-family · Sentiment Detection: The Speaker Diarization Layer

Before any behavior can be scored, the system needs to know who said what and how they felt when they said it. The sentiment detection model operates on raw transcript data — not just the full conversation, but individual speaker turns.

The evaluation philosophy here is instructive: the team explicitly optimizes for precision over recall, because false positives carry higher cost than false negatives in this context. A false positive — incorrectly flagging a positive interaction as negative — poisons agent scores and erodes trust in the system. The model achieves roughly 63% precision on transcript data and 75% precision on structured signal data, with known limitations around borderline cases. SupportLogic’s Sentiment Agent applies three levels of language analysis — fine-grained analysis, emotion detection, and aspect-based sentiment analysis — to surface the true voice of the customer.
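To make the precision-first framing concrete, here is a minimal sketch of how precision and recall could be measured for a negative-sentiment detector, and how a decision threshold might be picked to respect a precision floor. The labels, scores, and the 0.9 target are invented for illustration; they are not SupportLogic's data or settings.

```python
from typing import List, Optional, Tuple


def precision_recall(y_true: List[int], y_pred: List[int]) -> Tuple[float, float]:
    """Precision and recall for the flagged class (1 = negative sentiment)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


def lowest_threshold_meeting_precision(
    y_true: List[int], scores: List[float], target_precision: float
) -> Optional[float]:
    """Return the smallest threshold whose precision meets the target.

    Taking the smallest qualifying threshold keeps recall as high as
    possible while still honoring the precision floor.
    """
    for threshold in sorted(set(scores)):
        y_pred = [1 if s >= threshold else 0 for s in scores]
        precision, _ = precision_recall(y_true, y_pred)
        if precision >= target_precision:
            return threshold
    return None


# Hypothetical evaluation set: 1 = the turn really was negative.
labels = [1, 0, 1, 0, 0, 1, 0, 1]
scores = [0.95, 0.80, 0.85, 0.40, 0.60, 0.70, 0.20, 0.30]

threshold = lowest_threshold_meeting_precision(labels, scores, target_precision=0.9)
flags = [1 if s >= threshold else 0 for s in scores]
precision, recall = precision_recall(labels, flags)
```

In this toy data the precision floor is met only by giving up half of the true negatives, which is the trade the post describes: fewer wrongly penalized interactions at the cost of some missed ones.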

An important nuance: the team found that the “gold standard” negative signal labels in the evaluation data were themselves imperfect — manual review revealed false positives within the ground truth labels. This is the kind of subtle data quality problem that a pure LLM-as-judge approach would simply inherit, compounding the error. The specialized model’s behavior can be audited; an LLM’s reasoning is harder to trace.

TorchServe / Vertex AI · Acoustics Tonality: The Voice Emotion Model

Text alone doesn’t tell the whole story. The words “fine, thanks” look neutral in a transcript but can be spoken with icy fury. The Acoustics Tonality model addresses this by analyzing actual audio files for signs of agent anger: not customer sentiment, but agent tone, which is a direct quality signal. This capability is surfaced to end users through SupportLogic’s Voice Agent, which monitors 100% of voice calls for quality and passes them through a customizable support quality rubric.

The engineering choices here reveal the depth of the problem. The model operates on 3-second audio chunks extracted only from agent speaking intervals (identified via the transcript). It classifies seven emotional categories — angry, disgust, fearful, happy, neutral, sad, surprised — but only uses “angry” as a negative signal, for a carefully considered reason: thick accents are disproportionately classified as “disgust” by the model. Using disgust as a negative category would systematically penalize agents with non-native accents. This is an AI fairness decision embedded in the model design.

Why “fearful” and “sad” are also excluded

The model cannot reliably distinguish between genuinely fearful speech and low-amplitude voices. “Sad” classifications break down with accent variation. These exclusions aren’t model failures — they’re explicit, documented engineering decisions to prevent systematic bias. An LLM wrapper applied to audio transcripts would have no mechanism to detect or correct for this at all.

The model is deployed on a dedicated Vertex AI endpoint for larger payloads and longer timeouts — not the standard shared endpoint infrastructure. A confidence threshold gates the final “angry” classification; below the threshold, the system returns NEGATIVE (no anger detected) rather than making a low-confidence positive claim. The output includes timestamped 3-second windows of detected segments, enabling reviewers to jump directly to flagged moments.
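The windowing and gating logic described above can be sketched roughly as follows. This is an illustration under assumptions, not the production code: the 0.8 threshold, the `POSITIVE` label for "anger detected", the choice to drop partial trailing windows, and the shape of the per-window probabilities are all hypothetical. Only the 3-second window, the "angry"-only rule, and the `NEGATIVE` fallback come from the post.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

CHUNK_SECONDS = 3.0    # window size stated in the post
ANGER_THRESHOLD = 0.8  # hypothetical confidence threshold


@dataclass
class FlaggedWindow:
    start: float
    end: float
    confidence: float


def chunk_agent_intervals(intervals: List[Tuple[float, float]]) -> List[Tuple[float, float]]:
    """Split agent speaking intervals (in seconds) into full 3-second windows.

    Assumption: a trailing remainder shorter than 3 seconds is dropped.
    """
    windows = []
    for start, end in intervals:
        t = start
        while t + CHUNK_SECONDS <= end:
            windows.append((t, t + CHUNK_SECONDS))
            t += CHUNK_SECONDS
    return windows


def gate_anger(
    windows: List[Tuple[float, float]],
    predictions: List[Dict[str, float]],
    threshold: float = ANGER_THRESHOLD,
) -> Tuple[str, List[FlaggedWindow]]:
    """Flag a window only when 'angry' is the top label with enough confidence.

    Every other label (disgust, fearful, sad, ...) is ignored as a negative
    signal, and a low-confidence 'angry' falls back to NEGATIVE.
    """
    flagged = []
    for (start, end), probs in zip(windows, predictions):
        label = max(probs, key=probs.get)
        if label == "angry" and probs[label] >= threshold:
            flagged.append(FlaggedWindow(start, end, probs[label]))
    return ("POSITIVE" if flagged else "NEGATIVE"), flagged


# Hypothetical agent speaking intervals and per-window model outputs.
windows = chunk_agent_intervals([(0.0, 7.0), (12.0, 15.0)])
predictions = [
    {"neutral": 0.70, "angry": 0.30},
    {"angry": 0.91, "neutral": 0.09},
    {"disgust": 0.85, "angry": 0.15},  # never counted against the agent
]
result, flagged = gate_anger(windows, predictions)
```

Returning the flagged windows alongside the label is what lets a reviewer jump to the timestamped moment rather than replaying the whole call.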

RoBERTa-Base · Core Behavior Classification

The workhorse of AutoQA’s text scoring is RoBERTa-Base, fine-tuned for binary behavior classification across SupportLogic’s 13 QA behaviors. RoBERTa excels at the yes/no questions this system needs to answer at scale: “Did the agent acknowledge the issue?” “Did the agent offer additional help?” These are discriminative tasks — the model doesn’t need to generate anything, just classify with high confidence.

The scan level matters here. Some behaviors are evaluated at the comment level (each individual agent message is scored), while others are evaluated at the ticket level (the entire conversation is the unit of analysis). “Successful Resolution” is a ticket-level behavior. “Empathy” might be detected in a single comment. The model architecture and prompt context are adapted to match the scan level — this is not a one-size-fits-all inference call.
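One way to picture the scan-level distinction is a dispatcher that feeds the classifier either individual agent comments or the whole conversation. The sketch below is illustrative only: the `classify` callables stand in for a fine-tuned model, the 0.5 threshold is arbitrary, and treating a comment-level behavior as present when any single agent comment passes is an assumption about the aggregation rule.

```python
from typing import Callable, Dict, List

Classifier = Callable[[str], float]  # returns P(behavior present) for a text

# Scan levels for the two behaviors named in the post.
SCAN_LEVEL = {"empathy": "comment", "successful_resolution": "ticket"}


def evaluate_behavior(
    behavior: str,
    messages: List[Dict[str, str]],
    classify: Classifier,
    threshold: float = 0.5,
) -> bool:
    """Run the classifier at the granularity the behavior calls for."""
    if SCAN_LEVEL[behavior] == "comment":
        # Comment level: score each agent message on its own.
        agent_texts = [m["text"] for m in messages if m["speaker"] == "agent"]
        return any(classify(text) >= threshold for text in agent_texts)
    # Ticket level: the whole conversation is one input.
    full_text = "\n".join(f'{m["speaker"]}: {m["text"]}' for m in messages)
    return classify(full_text) >= threshold


# Toy stand-ins for the fine-tuned classifiers (keyword stubs, not real models).
def toy_empathy(text: str) -> float:
    return 0.9 if "sorry" in text.lower() else 0.1


def toy_resolution(text: str) -> float:
    return 0.9 if "confirmed fixed" in text.lower() else 0.1


messages = [
    {"speaker": "customer", "text": "This is the third outage this week."},
    {"speaker": "agent", "text": "I'm sorry about the repeated outages."},
    {"speaker": "agent", "text": "Restarting the service now."},
]
empathy_detected = evaluate_behavior("empathy", messages, toy_empathy)
resolved = evaluate_behavior("successful_resolution", messages, toy_resolution)
```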

SpaCy · Rule-Based Pattern Detection

Not every behavior needs a neural network. Some are better captured by precise linguistic patterns — and for those, SpaCy rules outperform model-based approaches in reliability, speed, and interpretability. Greeting detection, for instance, is well-served by a deterministic pattern matcher that looks for known opening phrases. The rule fires or it doesn’t; there’s no probability to threshold.

This isn’t a legacy compromise — it’s the right tool. Introducing model uncertainty where certainty is achievable adds noise to the downstream scoring. SpaCy rules are also trivially auditable: a QA team lead can read the rule, understand why it fired, and update it without retraining anything.
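As a rough illustration of why such rules are easy to audit, here is a plain-Python stand-in for a token-pattern greeting rule. It deliberately uses only the standard library rather than spaCy's matcher, and the phrase list and the "greeting must start within the first three tokens" cutoff are hypothetical, not SupportLogic's actual rules.

```python
import re
from typing import List, Optional

# Hypothetical opening phrases, written as lowercase token sequences.
GREETING_PATTERNS: List[List[str]] = [
    ["hello"],
    ["hi"],
    ["good", "morning"],
    ["good", "afternoon"],
    ["thank", "you", "for", "contacting"],
]
MAX_START_TOKEN = 3  # assumption: a greeting must begin near the start of the message


def tokenize(text: str) -> List[str]:
    """Lowercase word tokens; punctuation is dropped."""
    return re.findall(r"[a-z']+", text.lower())


def find_greeting(text: str) -> Optional[str]:
    """Return the matched greeting phrase, or None if no rule fires."""
    tokens = tokenize(text)
    for start in range(min(MAX_START_TOKEN, len(tokens))):
        for pattern in GREETING_PATTERNS:
            if tokens[start:start + len(pattern)] == pattern:
                return " ".join(pattern)
    return None


first = find_greeting("Good morning, thanks for reaching out!")
second = find_greeting("Your ticket has been escalated.")
```

Because the rule returns the phrase that matched, a reviewer can see exactly why it fired, and changing behavior means editing a list rather than retraining a model.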

Python Scripts · Timing and SLA Measurement

SLA adherence is purely computational. The first response time is a timestamp difference. Whether the agent responded within the SLA window is a comparison. No machine learning is involved or appropriate — and adding it would introduce unnecessary stochasticity into a calculation that should always return the same answer for the same input.
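A minimal sketch of that calculation, using only the standard library. The function names and timestamps are illustrative; the 15-minute window is just an example value.

```python
from datetime import datetime, timedelta


def first_response_time(case_opened: datetime, first_agent_reply: datetime) -> timedelta:
    """First response time is a plain timestamp difference."""
    return first_agent_reply - case_opened


def within_sla(response_time: timedelta, sla_window: timedelta) -> bool:
    """SLA adherence is a single comparison."""
    return response_time <= sla_window


# Illustrative timestamps (timezone-aware, so the subtraction is unambiguous).
opened = datetime.fromisoformat("2026-01-15T09:00:00+00:00")
replied = datetime.fromisoformat("2026-01-15T09:28:00+00:00")

frt = first_response_time(opened, replied)
met = within_sla(frt, timedelta(minutes=15))
```

The same inputs always produce the same answer, which is the property the paragraph above is asking for.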

The system’s principle here is explicit: “We do not let the LLM do the math.” LLMs are unreliable arithmeticians, particularly for weighted average calculations under structured constraints. Numeric grades flow from Python; LLMs receive those grades as facts to explain, not questions to compute.
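For illustration, a weighted score and letter grade computed entirely in Python might look like the sketch below. The behavior weights and grade cutoffs are hypothetical placeholders; the post states only that the score is a weighted 0 to 100 value and that the A to F grade is computed rather than inferred.

```python
from typing import Dict

# Hypothetical weights and cutoffs, for illustration only.
WEIGHTS: Dict[str, float] = {
    "greeting": 1.0,
    "empathy": 2.0,
    "successful_resolution": 3.0,
    "sla_adherence": 2.0,
}
GRADE_CUTOFFS = [(90, "A"), (80, "B"), (70, "C"), (60, "D")]


def weighted_score(results: Dict[str, bool], weights: Dict[str, float]) -> float:
    """Score = 100 * (weight of passed behaviors) / (weight of evaluated behaviors)."""
    total = sum(weights[b] for b in results)
    earned = sum(weights[b] for b, passed in results.items() if passed)
    return round(100.0 * earned / total, 1) if total else 0.0


def grade(score: float) -> str:
    """Map a numeric score to a letter using fixed cutoffs."""
    for cutoff, letter in GRADE_CUTOFFS:
        if score >= cutoff:
            return letter
    return "F"


results = {
    "greeting": True,
    "empathy": True,
    "successful_resolution": True,
    "sla_adherence": False,
}
score = weighted_score(results, WEIGHTS)
letter = grade(score)
```

The narrative layer would then receive `score` and `letter` as fixed inputs to explain, never as values to derive.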

LLM (GPT-4.1 mini) · Reasoning, Narrative, and Coaching

Once classification and scoring are complete, the LLM enters — not as an oracle, but as a narrator. The Quality Agent and Coaching Agent outputs are generated in a single LLM call from a fully assembled context bundle: case conversation, behavior analysis results, calculated score and grade, agent trend history, and customer CES trend.

The single-pass architecture is a deliberate cost and consistency decision. Sending the large context payload twice (once for Quality, once for Coaching) would roughly double input token costs across thousands of cases and introduce the risk of inconsistency — where the Quality summary says “Resolution: Good” but the Coaching summary says “You need to work on resolution.” Generating both in one window eliminates that possibility. Model and prompt selection are controlled independently via LaunchDarkly feature flags, enabling the team to swap either without a code deployment.
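The single-pass idea can be sketched as one prompt that requests both narratives in a single structured response. Everything named here is an assumption made for illustration: the JSON keys, the prompt wording, the bundle field names, and the `call_llm` callable, which stands in for whatever client actually sends the request.

```python
import json
from typing import Any, Callable, Dict

REQUIRED_KEYS = ("quality_summary", "coaching_summary")  # hypothetical output keys


def build_prompt(bundle: Dict[str, Any]) -> str:
    """Assemble one prompt that asks for both narratives at once."""
    return (
        "You are given a scored support case. The score and grade are facts; "
        "do not recompute them.\n"
        "Return a JSON object with the keys 'quality_summary' and "
        "'coaching_summary'.\n\n"
        "CONTEXT:\n" + json.dumps(bundle, indent=2)
    )


def generate_narratives(
    bundle: Dict[str, Any], call_llm: Callable[[str], str]
) -> Dict[str, str]:
    """Make exactly one LLM call and return both narratives from it."""
    raw = call_llm(build_prompt(bundle))
    parsed = json.loads(raw)
    missing = [key for key in REQUIRED_KEYS if key not in parsed]
    if missing:
        raise ValueError(f"LLM response is missing keys: {missing}")
    return {key: parsed[key] for key in REQUIRED_KEYS}


# Illustrative context bundle; field names are placeholders.
bundle = {
    "conversation": ["customer: The export fails.", "agent: Let me check the logs."],
    "behavior_results": {"empathy": True, "successful_resolution": False},
    "score": 75.0,
    "grade": "C",
    "agent_trends": None,
    "customer_ces_trend": None,
}
```

Because both summaries come out of the same context window, they are written against the same facts, which is the consistency argument made above.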

The LLM Experiments: How the Team Chose GPT-4.1 Mini

The path to the production LLM configuration was not a single benchmark lookup — it was a two-phase structured experiment with 100 samples in Phase 1 and 50 samples in Phase 2, using LLM-as-Judge metrics from Opik (Answer Relevance, Hallucination, Moderation) plus qualitative review.

Phase 1: Baseline on Three Models

Phase 1 tested Gemini 3 Flash Preview, GPT-5.2, and Gemini 2.5 Flash against 100 samples built from real Cvent and DeepInstinct case data. Gemini 2.5 Flash scored best on all three Opik metrics — but the team correctly identified that metrics alone were not telling the complete story.

| Metric | Gemini 3 Flash Preview | GPT-5.2 | Gemini 2.5 Flash |
|---|---|---|---|
| Answer Relevance ↑ | 0.8924 | 0.9070 | 0.9767 |
| Hallucination ↓ | 0.2636 | 0.1879 | 0.0010 |
| Moderation ↓ | 0.0225 | 0.0100 | 0.0000 |

Despite Gemini 2.5 Flash winning on metrics, qualitative review exposed four failure modes that made the outputs unusable in production:

- Generic score reasoning: outputs that restated the score without referencing actual behavior results

- Inconsistent persona voice: mixing “You demonstrated…” and “The agent demonstrated…” within the same response

- Hallucinated trends: claiming “grammar is a recurring issue” when `agent_context.trends` was empty

- Unsupported KB recommendations: suggesting articles that had no basis in the case metadata

Phase 2: Prompt Improvement and Model Re-Selection

Phase 2 addressed Phase 1’s failure modes through two parallel changes: context-level fixes (trend fields always present or explicitly null) and meta-prompting (using GPT-5.2 and Gemini 3.1 Pro to rewrite the prompt, producing two candidate prompts v1 and v2). All six combinations (2 prompts × 3 models) were evaluated against 50 Cvent samples with GPT-5.2 Pro as judge.

| Combination | Answer Relevance ↑ | Hallucination ↓ | Moderation ↓ |
|---|---|---|---|
| v2 / GPT-4.1 mini ✓ | 0.8798 | 0.1244 | 0.0040 |
| v2 / Gemini 2.5 Flash | 0.8982 | 0.1524 | 0.0370 |
| v2 / Gemini 3 Flash | 0.8924 | 0.1662 | 0.0380 |
| v1 / GPT-4.1 mini | 0.8782 | 0.1330 | 0.0040 |
| v1 / Gemini 2.5 Flash | 0.8964 | 0.1804 | 0.0320 |
| v1 / Gemini 3 Flash | 0.8958 | 0.1732 | 0.0350 |

GPT-4.1 mini with prompt v2 achieved the lowest hallucination score of any combination (0.1244) and tied with its v1 counterpart for the lowest moderation score (0.0040), while maintaining competitive answer relevance. Critically, its cost profile fit production volume requirements, a factor that Gemini 2.5 Flash’s marginally better relevance score couldn’t overcome.
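The ranking in the Phase 2 table can be checked with a few lines of Python. The numbers are copied from the table; cost is left out because the post gives no per-model figures, so this sketch covers only the metric side of the decision.

```python
# (answer relevance, hallucination, moderation) per combination, from the table.
PHASE2 = {
    "v2 / GPT-4.1 mini": (0.8798, 0.1244, 0.0040),
    "v2 / Gemini 2.5 Flash": (0.8982, 0.1524, 0.0370),
    "v2 / Gemini 3 Flash": (0.8924, 0.1662, 0.0380),
    "v1 / GPT-4.1 mini": (0.8782, 0.1330, 0.0040),
    "v1 / Gemini 2.5 Flash": (0.8964, 0.1804, 0.0320),
    "v1 / Gemini 3 Flash": (0.8958, 0.1732, 0.0350),
}

# Lower is better for hallucination and moderation; higher for relevance.
lowest_hallucination = min(PHASE2, key=lambda name: PHASE2[name][1])
highest_relevance = max(PHASE2, key=lambda name: PHASE2[name][0])

min_moderation = min(values[2] for values in PHASE2.values())
lowest_moderation = sorted(
    name for name, values in PHASE2.items() if values[2] == min_moderation
)

# Relevance given up by choosing the lowest-hallucination combination.
relevance_gap = round(
    PHASE2[highest_relevance][0] - PHASE2[lowest_hallucination][0], 4
)
```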

A Separate Experiment: LLMs for Behavior Classification Itself

In parallel, the team explored a different question: can an LLM replace the fine-tuned BERT classifiers altogether? The “Classification + Reasoning” experiment tested Gemini 2.5 Flash Lite, Flash, and Pro on the “Successful Resolution” behavior against a Red Hat Bugzilla-style case where the correct classification was Negative.

The revealing result

Gemini Flash Lite and Gemini Pro both correctly classified the case as Negative. Gemini Flash misclassified it as Positive in all runs across all thinking token configurations — yet still produced coherent reasoning about why the case was positive. This is the hallmark of confabulation: confident, articulate, wrong.

The experiment’s conclusion is nuanced: reasoning reliability was higher than classification reliability across the board. Even when a model misclassified, its reasoning referenced the correct cues. This led to a targeted LLM use case — generating reasoning statements to explain pre-computed BERT classifications, not replace them.

| Model | Classification | Avg Latency | Suitability |
|---|---|---|---|
| Flash Lite (0 tokens) | ✓ Correct (Negative) | ~1.77s | Real-time / batch |
| Flash (all configs) | ✗ Misclassified (Positive) | 1.3–8.1s | Quality explanations |
| Pro (auto tokens) | ✓ Correct (Negative) | 13.2s | QA review tools only |
| Pro (512 tokens) | ✓ Correct (Negative) | 5.04s | Most balanced output |

Why This Cannot Be a GenAI Wrapper — A Technical Reckoning

Let’s be direct about what a “GenAI wrapper” would look like here: send the transcript and some metadata to an LLM, ask it to score the 13 behaviors, return a JSON object. It’s tempting. It’s also wrong for this problem. Here’s why, behavior by behavior:

1. Audio Does Not Exist for an LLM

The Acoustics Tonality model operates on raw audio waveforms — 3-second chunks of agent speech, analyzed frame-by-frame for emotional features. A transcript can say “Sure, let me look into that” without conveying the seething frustration in the agent’s voice. An LLM receiving text has zero signal from audio. You cannot infer acoustic anger from words. The modality gap is absolute.

2. Timing Is a Calculation, Not an Inference

SLA adherence requires a timestamp difference and a threshold comparison. An LLM that “reasons” about whether a 28-minute response time violates a 15-minute SLA is doing arithmetic in natural language — unreliably. The ground truth is in the data; compute it.

3. Hallucinated Trends Are Worse Than No Trends

One of Phase 1’s most damaging failure modes was the LLM hallucinating agent trends — stating “grammar is a recurring issue” when the agent context trends were empty. In an agent coaching context, a fabricated trend is not just useless; it’s harmful. An agent might receive coaching on a pattern that doesn’t exist. The fix required a data layer change (always populating or explicitly nullifying trend fields) and a prompt constraint. A naive wrapper would have no mechanism to prevent this.
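A sketch of what the data-layer half of that fix could look like: the trend field is always present, and an empty value becomes an explicit null that the prompt can reference. The `agent_context` and `trends` names follow the field mentioned earlier in the post; the helper names and the instruction wording are hypothetical.

```python
from typing import Any, Dict, Optional


def normalize_agent_context(agent_context: Optional[Dict[str, Any]]) -> Dict[str, Any]:
    """Guarantee that 'trends' exists; empty or missing becomes explicit None."""
    context = dict(agent_context or {})
    trends = context.get("trends")
    context["trends"] = list(trends) if trends else None
    return context


def trend_instruction(agent_context: Dict[str, Any]) -> str:
    """Prompt constraint that depends on whether trend data actually exists."""
    if agent_context["trends"] is None:
        return (
            "agent_context.trends is null: do not claim any recurring "
            "pattern or trend for this agent."
        )
    return "Only mention trends that appear in agent_context.trends."


empty_context = normalize_agent_context({"trends": []})
instruction = trend_instruction(empty_context)
```

Making the absence explicit gives the prompt something concrete to point at, instead of leaving a gap for the model to fill.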

4. Non-Determinism Breaks Audit Trails

Agent QA scores are used in performance reviews, QBRs, and escalation decisions. They must be reproducible and auditable. An LLM producing slightly different scores on each run — because of sampling temperature, context window differences, or prompt sensitivity — cannot underpin a process with real organizational consequences. The system’s scoring is deterministic Python; the LLM only explains. If the explanation changes, the score doesn’t.

5. False Positive Costs Are Asymmetric

The sentiment detection evaluation explicitly prioritizes precision because false positives are costly. An LLM without this explicit calibration defaults to its own sense of what “negative sentiment” means — which may not match the product’s threshold or the customer’s definition. Specialized models can be threshold-tuned per business context. LLMs require significant prompt engineering to approach similar control, and even then the behavior is harder to verify at scale.

6. Cost Does Not Scale

The system operates under a hard constraint of less than $1 per case. Running a large-context LLM call for each of the 13 behaviors on every case, at the volume of cases SupportLogic processes, would blow through that budget. The single-pass architecture for the generative layer (one call producing both Quality and Coaching outputs) was specifically chosen to stay inside the cost envelope. Replacing the entire stack with LLM calls would require either a severe quality downgrade to cheap models or cost structures incompatible with the product. SupportLogic’s guide to QA tools discusses how AutoQA’s modular pricing model avoids this trap by charging based on what you use.
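The shape of the cost argument can be shown with back-of-the-envelope arithmetic. Every number below is a made-up placeholder (token counts and per-million-token prices are not from the post or from any vendor's price list); only the structure of the comparison is the point.

```python
def call_cost(
    input_tokens: int,
    output_tokens: int,
    price_in_per_million: float,
    price_out_per_million: float,
) -> float:
    """Dollar cost of one LLM call given token counts and per-million prices."""
    return (
        input_tokens / 1_000_000 * price_in_per_million
        + output_tokens / 1_000_000 * price_out_per_million
    )


# Hypothetical figures for a single case.
CONTEXT_TOKENS = 20_000  # full context bundle
NARRATIVE_TOKENS = 500   # one narrative (Quality or Coaching)
VERDICT_TOKENS = 100     # one per-behavior verdict
PRICE_IN = 1.00          # dollars per million input tokens
PRICE_OUT = 4.00         # dollars per million output tokens

# One call that produces both narratives.
single_pass = call_cost(CONTEXT_TOKENS, 2 * NARRATIVE_TOKENS, PRICE_IN, PRICE_OUT)

# Two calls, each resending the full context.
two_pass = 2 * call_cost(CONTEXT_TOKENS, NARRATIVE_TOKENS, PRICE_IN, PRICE_OUT)

# A wrapper that sends the full context once per behavior.
per_behavior_wrapper = 13 * call_cost(CONTEXT_TOKENS, VERDICT_TOKENS, PRICE_IN, PRICE_OUT)
```

Under these placeholder numbers the input side dominates, so each extra call that resends the context adds close to a full call's cost, and that multiplies again across case volume.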

Where LLMs Do Belong in AutoQA

None of this is an argument against LLMs. It’s an argument for appropriate tool selection. In the AutoQA stack, LLMs do the things they’re genuinely suited for:

- Narrative generation — explaining why a score is what it is in human-readable prose, grounded in the behavior results they receive as structured input

- Coaching synthesis — producing forward-looking, mentor-toned development guidance that references the agent’s historical trends and the specific behaviors that drove the score, surfaced through the Coaching Agent

- Reasoning enrichment — generating short, contextual reasoning statements for each classified behavior (the AutoQA Reasoning experiment), adding transparency without touching the classification itself

- Prompt improvement — the meta-prompting technique used in Phase 2, where frontier models were asked to rewrite prompts based on failure cases, is a legitimate high-leverage LLM use case

The LLM’s job is to synthesize, narrate, and explain — on top of a foundation that specialized models have already built. This is the correct division of cognitive labor. SupportLogic’s AI platform overview describes the full agent ecosystem this stack powers.

Engineering Lessons Worth Generalizing

Separation of Concerns Across Model Types

Different model types have different reliability profiles. Rule-based systems are perfectly reliable at the patterns they encode. Discriminative models are stable and auditable. Generative models are flexible but stochastic. Know which profile your use case requires before picking a model class.

Ground Truth Is Messy — Evaluate Your Evaluations

The discovery that the signal labels themselves contained false positives — meaning the “gold standard” was impure — is a critical lesson. Evaluation data quality is a model quality problem. Treating evaluation sets as ground truth without audit is a common and costly error.

Metrics Alone Don’t Surface Failure Modes

Phase 1’s Gemini 2.5 Flash scored best on all three Opik metrics yet failed qualitative review on hallucinated trends, voice inconsistency, and unsupported recommendations. Automated metrics are necessary but not sufficient. Build qualitative review into your evaluation process, not as an afterthought but as a gate.

Context Completeness Is a Data Engineering Problem

Several Phase 1 failure modes traced back to incomplete context — specifically, empty agent trend fields that the LLM filled with fabrications. The fix wasn’t a better prompt; it was ensuring the data layer always provided complete or explicitly null context. Prompt engineering cannot compensate for missing data.

Cost Is an Architectural Constraint, Not an Afterthought

The single-pass Quality + Coaching architecture, the choice of GPT-4.1 mini over larger models, the threshold-gated acoustic classifier — all of these decisions have cost as a design input. At scale, per-inference cost compounds. Building for cost-efficiency from day one shapes better architectures than retrofitting it later.

The CCaaS Wars Just Got Serious — And QA Is the Weapon Nobody Talks About

On March 10, 2026, at Enterprise Connect in Las Vegas, Salesforce crossed a line it drew in 2023. The company that once explicitly said it did not want to become a contact center provider launched Agentforce Contact Center — a fully native, agentic CCaaS offering that unifies voice, digital channels, CRM data, and AI agents in a single system. Kishan Chetan, EVP and GM of Agentforce Service, described it as an “iPhone moment.” The industry is still processing what that means.

What changed Salesforce’s mind?

Chetan’s explanation was disarmingly direct: “As we bring AI agents and humans together, we realize that having all of the data in one place, making sure that the contexts are fully passed together, becomes really important.” This is an architectural argument, not a competitive one. When voice data lives in Genesys and customer history lives in Salesforce, there is a seam between them. At that seam, AI context degrades — the agent on the phone doesn’t know what the customer bought last month. Salesforce concluded that AI cannot work across that seam well enough. So they eliminated it.

Analysis from SalesforceDevops.net frames it sharply: this is a platform sovereignty play disguised as a contact center product. The CCaaS capability is the vehicle; data gravity is the destination. Every voice call that flows through Agentforce Contact Center becomes structured data that trains Salesforce’s AI agents, informs supervisors, and feeds the CRM. Established CCaaS vendors — Genesys, NICE, Five9, Amazon Connect — now face a formidable competitor embedded inside the platform their own customers already use daily.

The implications ripple outward. CX Today notes that this is a pivot from “AI in the contact center” to “AI as the contact center” — where automation is the first line of service and human agents handle exceptions and escalations. Futurum Research observes that 80% of CIOs cite data security and privacy as leading AI adoption barriers — meaning the governance, auditability, and transparency of AI decisions in the contact center have never been more consequential.

Why This Makes AutoQA the Most Strategically Relevant Feature in the Stack

Here’s the tension that the Salesforce entry exposes across the entire CCaaS market: every vendor is now racing to build agentic AI capabilities, to unify channels, to eliminate the integration tax. But nobody can be confident their AI agents are actually performing well unless they have deep, defensible quality assurance built in.

When AI agents handle the first line of service, autonomously resolving customer issues at scale, QA stops being a compliance function and becomes an operational control surface. If an AI agent fails to acknowledge a frustrated customer, hallucinates a resolution, or misses an escalation cue, you need to know about it before it becomes a churn event, a regulatory issue, or a viral moment on social media. You cannot review 100% of AI-handled interactions manually. Automated QA at the depth and precision AutoQA provides isn’t a nice-to-have anymore; it’s the only viable oversight mechanism.

— SQM Group, 2026 Contact Center Best Practices

What Every CCaaS Vendor Now Needs to Answer

The CCaaS market has over 200 competitors, and while vendor messaging increasingly sounds similar, the functional depth of QA capabilities is one of the sharpest real differentiators. Consider what the market leaders are doing:

- NICE CXone: built its QA and workforce engagement toolset from its legacy as a workforce optimization (WFO) provider; its Enlighten AI powers auto-QA scoring and sentiment analysis natively within the platform.

- Genesys Cloud CX: offers a Virtual Supervisor for AI-powered evaluation scoring and native WEM including scheduling, forecasting, quality management, and gamification; in 2025 it became the first CCaaS vendor to pass $2B in annual recurring revenue.

- Microsoft Dynamics 365 Contact Center: actively building out a Workforce Engagement Management suite, with QA pieces assembling toward an agentic auto-scoring capability.

- Zendesk Contact Center: acquired Local Measure to enter CCaaS, and bundles WFM, QA, and AI agents within the same platform environment, which is attractive for its 110,000+ existing CRM customers.

But there’s a cautionary tale embedded in this arms race. CX Today reports that a major supermarket chain recently purchased a CCaaS platform in a seven-figure deal — and then discovered its bundled QA solution met only 15% of the QA team’s actual requirements. The procurement team had checked the “QA” box without interrogating what was underneath. As agentic AI handles more customer interactions end-to-end, that gap between checkbox QA and precision QA becomes catastrophically expensive.

This is exactly the gap SupportLogic’s AutoQA is designed to fill. Where CCaaS-bundled QA tools offer broad coverage within a single vendor ecosystem, AutoQA’s multi-model stack — the precise behavioral scoring, the acoustic tone detection, the generative coaching layer — operates at a depth of signal that generic platform QA cannot replicate. The technical architecture described throughout this post isn’t just an engineering achievement; it’s the product’s moat.

The QA Imperative Is Not Optional for Agentforce — or Anyone

Salesforce’s Agentforce Contact Center currently has no deeply native, precision QA capability at the depth that established WEM vendors or specialist platforms like SupportLogic provide. NoJitter’s analysis notes that Salesforce still trails leaders like NICE and Verint in workforce management and compliance analytics — precisely the domains that QA lives in. The trade-off for Agentforce’s native unification is reduced functional depth in these areas.

For any CCaaS vendor — Salesforce included — that wants to win enterprise accounts where AI agents are being trusted with customer-critical interactions, QA isn’t a downstream feature request. It’s the infrastructure of trust. You cannot credibly sell autonomous AI agents to an enterprise buyer without also answering: “How will you know when your AI agents are underperforming, and how fast will you know it?”

The answer to that question is AutoQA. Not a checkbox. Not a bundled scorecard. A precision, multi-model, behavior-specific measurement system that can detect anger in an agent’s voice, score 13 distinct interaction behaviors without LLM arithmetic, and generate coaching guidance grounded in actual observed trends — at the scale and cost that enterprise contact centers demand.

As the CCaaS market consolidates around a handful of platform giants, the vendors who survive won’t just be the ones who unified the channels. They’ll be the ones who can prove, at the interaction level, that their AI is doing its job well. That proof requires exactly the kind of engineering this post has described.

Conclusion

SupportLogic’s AutoQA is a reminder that the right ML system for a complex enterprise problem looks less like a single powerful model and more like a well-orchestrated ensemble of purpose-built components. RoBERTa for discriminative text classification. SpaCy for interpretable rule-based detection. Python for anything that’s actually arithmetic. A dedicated GPU endpoint on Vertex AI for audio emotion. And — after all of that — an LLM for the one thing LLMs are genuinely exceptional at: making sense of the output in plain language.

The wrapper would have been faster to build and dramatically less reliable in production. The precision ML stack took longer to design, evaluate, and harden — and that investment is exactly what makes AutoQA’s scores defensible in a performance review conversation. That defensibility is the product.

And now, with Salesforce entering CCaaS and every major platform doubling down on agentic AI, the stakes around that defensibility have sharply increased. When AI agents resolve customer issues at scale with no human in the loop, QA is no longer a reporting function — it’s the only mechanism of control. The CCaaS vendors who win the next decade won’t just be the ones who unified voice, channels, and CRM. They’ll be the ones who can prove, at the level of individual behaviors and individual interactions, that their AI is doing its job well. That proof requires exactly the kind of precision engineering this post has described.
