If you’ve ever watched a sentiment analysis demo at a vendor booth, it probably went something like this: someone pastes “I love this product!” into a box, a green smiley face appears, and everyone nods. Then “This is terrible.” returns a red frown, and the deal is practically closed. Now try the same demo with a real enterprise support ticket.

The 15-minute sentiment demo that doesn’t survive contact with real support data

Try running this actual line from a real enterprise support ticket through a consumer-grade sentiment classifier:

An off-the-shelf sentiment classifier will stare at this sentence, notice the word “fine,” spot no profanity, and confidently return neutral — or worse, positive. A seasoned support engineer reads the same sentence and immediately recognizes a customer who is technically constrained, politely frustrated, and one unsatisfactory answer away from escalating to their VP.

That gap — between what a sentiment model says is happening and what is actually happening inside a B2B support conversation — is the problem this post is about. It’s also the reason SupportLogic built its platform around deep sentiment analysis rather than the polarity-scoring approach that dominates the rest of the market.

What “simple” sentiment analysis actually does

Before we dismantle it, let’s be precise about what most sentiment tools are doing under the hood. The simplest implementations — the ones bundled into CRMs, survey platforms, and social listening dashboards — generally fall into one of three buckets:

- Lexicon-based scoring. A dictionary (VADER, AFINN, SentiWordNet) assigns a polarity value to each word, the scores are summed and typically normalized, and you get a number between −1 and +1. This is the approach documented in Stanford’s classic sentiment analysis overview and still powers a surprising amount of production software.

- Binary or ternary classifiers. A supervised model (often logistic regression, SVM, or a fine-tuned BERT) trained on movie reviews (IMDB), tweets (Sentiment140), or product reviews (Amazon), outputting one of {positive, negative, neutral}.

- General-purpose LLM prompts. “Classify the sentiment of this message” sent to a general model with no support-domain grounding.

Each of these assumes a stable mapping: word or phrase → emotion. That assumption holds up reasonably well for movie reviews, restaurant ratings, and tweets about airlines. It collapses almost immediately in enterprise support.
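To make that word-to-emotion mapping concrete, here is a minimal sketch of lexicon-based scoring. The lexicon and its weights are invented for illustration (they are not taken from VADER, AFINN, or SentiWordNet), and real libraries add normalization and heuristics that this toy version skips.

```python
import re

# Toy polarity lexicon. Words and weights are made up for this example.
TOY_LEXICON = {
    "love": 0.8,
    "great": 0.7,
    "fine": 0.3,
    "terrible": -0.9,
    "crash": -0.6,
    "kill": -0.7,
}


def lexicon_score(text: str, lexicon: dict[str, float] = TOY_LEXICON) -> float:
    """Sum per-word polarity and clamp the total to [-1, 1]."""
    tokens = re.findall(r"[a-z]+", text.lower())
    total = sum(lexicon.get(tok, 0.0) for tok in tokens)
    return max(-1.0, min(1.0, total))


def label(score: float, threshold: float = 0.05) -> str:
    """Map a score to one of three classes using a symmetric threshold."""
    if score > threshold:
        return "positive"
    if score < -threshold:
        return "negative"
    return "neutral"


print(label(lexicon_score("I love this product!")))  # positive
print(label(lexicon_score("This is terrible.")))     # negative
```

The demo sentences work because each contains exactly one strongly polar word. Nothing in this scorer knows who is speaking, about what, or in which domain.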

Why B2B support communication breaks sentiment models

Support tickets aren’t product reviews. They are long, technical, multi-party, contextual exchanges between people who are paid to be professional and whose livelihoods depend on problems getting fixed. Here’s what actually happens in that text — and why each trait is a landmine for off-the-shelf models.

01 Technical jargon inverts the polarity of common words

In everyday English, “failure,” “kill,” “abort,” “crash,” “dump,” and “terminate” are strongly negative. In a support ticket, they are often neutral, descriptive, or even good news:

A lexicon-based model sees kill, zombie, crash, and dump and returns something close to −0.8. The customer, in reality, is relieved. The opposite pattern also happens constantly: “The build is green” is a positive technical statement that mentions a color, which most general-purpose models treat as neutral.

This isn’t an edge case — it’s the default mode of communication in technical domains. Research on domain-specific NLP has consistently found that models trained on general corpora perform poorly on specialized vocabularies where the same tokens carry inverted or entirely different meaning. A defense/intelligence NLP writeup makes the same point bluntly: terms like “SIGINT” or “CONOP” carry precise meanings that general-purpose language models may misinterpret or ignore. The same is true of kubelet, OOMKilled, retention policy, blast radius, and thousands of other terms that pepper enterprise support.
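A rough way to see the inversion is to score the same sentence with a general lexicon and with a support-specific override layer. Both lexicons below are hypothetical and hand-written for this example; a domain-tuned model learns these shifts from data rather than from a static table, so treat this only as an illustration of the effect.

```python
import re

# Hypothetical general-purpose polarities for words that sound negative.
GENERAL = {"kill": -0.7, "crash": -0.6, "dump": -0.4, "zombie": -0.5, "failure": -0.8}

# Hypothetical support-domain overrides: routine operational verbs become
# neutral, and a few technical "good news" words become mildly positive.
SUPPORT_OVERRIDES = {"kill": 0.0, "dump": 0.0, "zombie": 0.0, "cleared": 0.4, "green": 0.3}

# Later keys win, so the overrides replace the general values.
SUPPORT = {**GENERAL, **SUPPORT_OVERRIDES}


def score(text: str, lexicon: dict[str, float]) -> float:
    """Sum per-word polarity and clamp to [-1, 1]."""
    tokens = re.findall(r"[a-z]+", text.lower())
    total = sum(lexicon.get(tok, 0.0) for tok in tokens)
    return max(-1.0, min(1.0, total))


message = "We ran kill -9 and it finally cleared"
print(score(message, GENERAL))  # negative: driven entirely by "kill"
print(score(message, SUPPORT))  # positive: "kill" is neutral, "cleared" is good news
```

A static override table has an obvious limit: "crash" is sometimes a neutral description and sometimes the actual complaint, which is why context matters more than any single word list.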

02 The “polite enterprise” register hides urgency

B2B customers are professionals writing on behalf of their employer. They don’t write “THIS SUCKS” even when their production cluster is on fire. They write:

“We’d appreciate any update at your earliest convenience. Our leadership is asking for a status by EOD.”

Every word in that sentence is polite. A polarity-based model will score it neutral-to-mildly-positive. Any experienced support manager reads it as a five-alarm fire: “leadership,” “asking,” and “EOD” together mean the customer is already escalating internally and is about to escalate externally.

An earlier post on our blog, Tell Me How You Really Feel, makes this point at the strategic level: enterprise customers say things they don’t literally mean all the time, and a model that reads only the surface will miss the case until it’s already escalated. Deep sentiment analysis has to distinguish tone (polite) from intent (urgent / frustrated / at-risk).
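One way to picture the tone-versus-intent split is to read a message along two separate axes instead of one polarity score. The sketch below is a deliberately crude keyword version: the cue lists and the two-hit threshold are assumptions made up for this example, not a description of how any production system detects urgency.

```python
import re
from dataclasses import dataclass

# Hypothetical cue lists, chosen only to illustrate the idea.
POLITE_CUES = [r"\bappreciate\b", r"\bplease\b", r"\bearliest convenience\b", r"\bthank(s| you)\b"]
URGENCY_CUES = [r"\bleadership\b", r"\bEOD\b", r"\bstatus\b", r"\bexecutive\b", r"\bVP\b", r"\bblocked\b"]


@dataclass
class Reading:
    polite: bool             # surface tone
    urgency_hits: list[str]  # matched intent cues

    @property
    def at_risk(self) -> bool:
        # Assumed rule of thumb: two or more urgency cues in one message.
        return len(self.urgency_hits) >= 2


def read_message(text: str) -> Reading:
    """Score tone and intent independently so one cannot mask the other."""
    polite = any(re.search(p, text, re.IGNORECASE) for p in POLITE_CUES)
    hits = [m.group(0) for p in URGENCY_CUES if (m := re.search(p, text, re.IGNORECASE))]
    return Reading(polite=polite, urgency_hits=hits)


msg = ("We'd appreciate any update at your earliest convenience. "
       "Our leadership is asking for a status by EOD.")
reading = read_message(msg)
print(reading.polite, reading.at_risk)  # True True
```

The useful part is the output shape: a message can be polite and at-risk at the same time, which a single number between −1 and +1 cannot express.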

03 Sarcasm, understatement, and negation are the norm

The academic literature on sentiment analysis has been calling out sarcasm and negation as unsolved problems for over a decade (see Sentiment analysis and the complex natural language). Support tickets double down on both:

- Sarcasm: “Great, another kernel panic. Exactly what I wanted on a Friday.”

- Understatement: “This is a bit of a problem for our production environment.” (translation: we are down)

- Complex negation: “I wouldn’t say the patch didn’t help, but we’re not seeing the improvement we were promised.”

That third example contains a double negative, a concession, and an implicit accusation. A bag-of-words model scores it on “help” (positive) and “promised” (positive) and comes back with a smiley face. The customer is, in fact, quietly furious.

04 Mixed sentiment per ticket, per paragraph, per sentence

A single support ticket frequently contains:

- Positive sentiment toward one aspect (“your docs are excellent”)

- Negative sentiment toward another (“but the CLI is unusable”)

- Neutral factual content (“we’re running version 4.2.1 on RHEL 9”)

- Implicit urgency (“our customer-facing deploy is blocked”)

A single polarity score averages these into mush. You end up with a “neutral” ticket that is simultaneously a testimonial, a bug report, an escalation risk, and a product feedback signal. This is exactly the problem that aspect-based sentiment analysis (ABSA) was designed to solve — decomposing text so that each aspect (product, agent, documentation, SLA) gets its own sentiment score. A 2024 state-of-the-art review of NLP sentiment analysis identifies ABSA as one of the few techniques that meaningfully moves the needle on complex, multi-topic text.
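The difference between one averaged score and per-aspect scores can be sketched in a few lines. Everything here is a simplification under stated assumptions: the aspect keywords and polarity weights are invented, clause splitting is a regex, and real ABSA systems use learned models rather than keyword lookups.

```python
import re

# Hypothetical aspect vocabulary and polarity weights for this example only.
ASPECT_KEYWORDS = {
    "documentation": ["docs", "documentation"],
    "cli": ["cli"],
    "deployment": ["deploy"],
}
POLARITY = {"excellent": 1.0, "unusable": -1.0, "blocked": -0.8}

# Split on sentence-ending punctuation (followed by space or end of text)
# and on a contrastive "but", so "4.2.1" is not broken apart.
CLAUSE_BREAK = re.compile(r"[.;!?]+(?:\s+|$)|,?\s*\bbut\b", re.IGNORECASE)


def split_clauses(text: str) -> list[str]:
    return [part.strip() for part in CLAUSE_BREAK.split(text) if part.strip()]


def clause_score(clause: str) -> float:
    tokens = re.findall(r"[a-z]+", clause.lower())
    return sum(POLARITY.get(tok, 0.0) for tok in tokens)


def aspect_sentiment(text: str) -> dict[str, float]:
    """Attribute each clause's polarity to the aspects it mentions."""
    results: dict[str, float] = {}
    for clause in split_clauses(text):
        tokens = re.findall(r"[a-z]+", clause.lower())
        for aspect, keywords in ASPECT_KEYWORDS.items():
            if any(kw in tokens for kw in keywords):
                results[aspect] = results.get(aspect, 0.0) + clause_score(clause)
    return results


ticket = ("Your docs are excellent, but the CLI is unusable. "
          "We're running version 4.2.1 on RHEL 9. "
          "Our customer-facing deploy is blocked.")

clauses = split_clauses(ticket)
flat = sum(clause_score(c) for c in clauses) / len(clauses)
print(round(flat, 2))            # a single mildly negative number
print(aspect_sentiment(ticket))  # separate scores for documentation, cli, deployment
```

The flat average hides both the praise for the docs and the severity of the blocked deploy; the per-aspect view keeps them apart so each can be routed to the team that owns it.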

05 The conversation has memory; a single message does not

Support is a thread, not a message. The sentiment of the customer’s sixth reply depends heavily on what happened in replies one through five. If the agent has already missed two commitments, a technically polite “Any update?” on reply six is a very different signal than the same text on reply one. Most sentiment tools score messages in isolation, throwing away the conversational context that carries most of the information.
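Here is a small sketch of why position in the thread matters. The `Turn` structure, the `missed_commitment` flag (assumed to be set by some upstream step), and all of the weights are hypothetical; the only point is that identical text can carry a different risk depending on what came before it.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    author: str                      # "customer" or "agent"
    text: str
    missed_commitment: bool = False  # hypothetical flag from an upstream step


def contextual_risk(thread: list[Turn], index: int) -> float:
    """Risk in [0, 1] for thread[index], computed from earlier turns only."""
    history = thread[:index]
    missed = sum(t.missed_commitment for t in history if t.author == "agent")
    prior_customer_turns = sum(t.author == "customer" for t in history)
    # Illustrative weights: missed commitments dominate, repeated
    # follow-ups add a smaller amount each.
    risk = 0.1 + 0.3 * missed + 0.05 * prior_customer_turns
    return min(1.0, risk)


# "Any update?" as the very first customer message.
early = [Turn("customer", "Any update?")]

# The same text as the sixth customer message, after two missed commitments.
late: list[Turn] = []
for i in range(5):
    late.append(Turn("customer", f"Follow-up {i + 1}"))
    late.append(Turn("agent", "We will get back to you.", missed_commitment=(i < 2)))
late.append(Turn("customer", "Any update?"))

early_risk = contextual_risk(early, 0)
late_risk = contextual_risk(late, len(late) - 1)
print(early_risk < late_risk)  # True
```

A message-in-isolation classifier would return the same answer for both cases, because the text it sees is identical.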

06 Multilingual and code-mixed input

Enterprise support teams serve global customers. Tickets arrive in English, German, Japanese, Portuguese, Hindi, and often in two languages at once (“Hola, here is the stack trace:”). Markov ML’s writeup on sentiment analysis challenges notes that machine translation routinely strips emotional nuance before it ever reaches the classifier. By the time a translated ticket hits a sentiment model, the signal is half-gone.

07 Structured content mixed with prose

A typical support message looks like this:

A naïve sentiment pipeline will either (a) tokenize the stack trace and light up on “FATAL,” “ERROR,” and “refused,” dragging the score wildly negative for the wrong reason, or (b) try to filter logs and accidentally discard informative signal. Correctly separating log content from human content — and then reading each appropriately — is a non-trivial preprocessing problem that consumer sentiment tools do not solve.
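As a rough illustration of the preprocessing step, the sketch below routes each line of a message to either a "prose" bucket or a "logs" bucket using a handful of regular expressions. The patterns and the sample message are hypothetical and far from exhaustive; real tickets contain many more log formats, and some lines are genuinely ambiguous.

```python
import re

# Heuristic patterns for lines that look machine-generated (illustrative only).
LOG_PATTERNS = [
    re.compile(r"^\s*\d{4}-\d{2}-\d{2}[T ]\d{2}:\d{2}"),            # leading timestamp
    re.compile(r"^\s*\[?(FATAL|ERROR|WARN|INFO|DEBUG)\]?[\s:]"),    # leading log level
    re.compile(r"^\s+at\s+[\w.$<>]+\("),                            # Java-style stack frame
    re.compile(r"^Traceback \(most recent call last\)"),            # Python traceback header
    re.compile(r'^\s*File ".*", line \d+'),                         # Python traceback frame
]


def split_prose_and_logs(message: str) -> tuple[list[str], list[str]]:
    """Return (prose_lines, log_lines), dropping blank lines."""
    prose: list[str] = []
    logs: list[str] = []
    for line in message.splitlines():
        if not line.strip():
            continue
        if any(p.search(line) for p in LOG_PATTERNS):
            logs.append(line)
        else:
            prose.append(line)
    return prose, logs


# Hypothetical message, written for this example.
sample = "\n".join([
    "Hi team, the connector stopped syncing after last night's upgrade.",
    "2024-05-01 02:13:44 ERROR connection refused",
    "FATAL: worker exited",
    "    at com.example.Sync.run(Sync.java:42)",
    "Could you take a look when you get a chance? Thanks.",
])

prose, logs = split_prose_and_logs(sample)
print(len(prose), len(logs))  # 2 3
```

With the buckets separated, sentiment can be read from the human-written lines while the log lines go to a parser that extracts error types and components, instead of letting "FATAL" and "refused" drive the score.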

What “deep” sentiment analysis actually means

When we talk about deep sentiment analysis at SupportLogic, we’re not using “deep” as a marketing synonym for “good.” We mean something specific: a stack of NLP techniques layered on top of a domain-tuned model that’s been trained on millions of real enterprise support interactions. Our Sentiment Agent page describes the three-layer approach; here’s the technical picture underneath it.

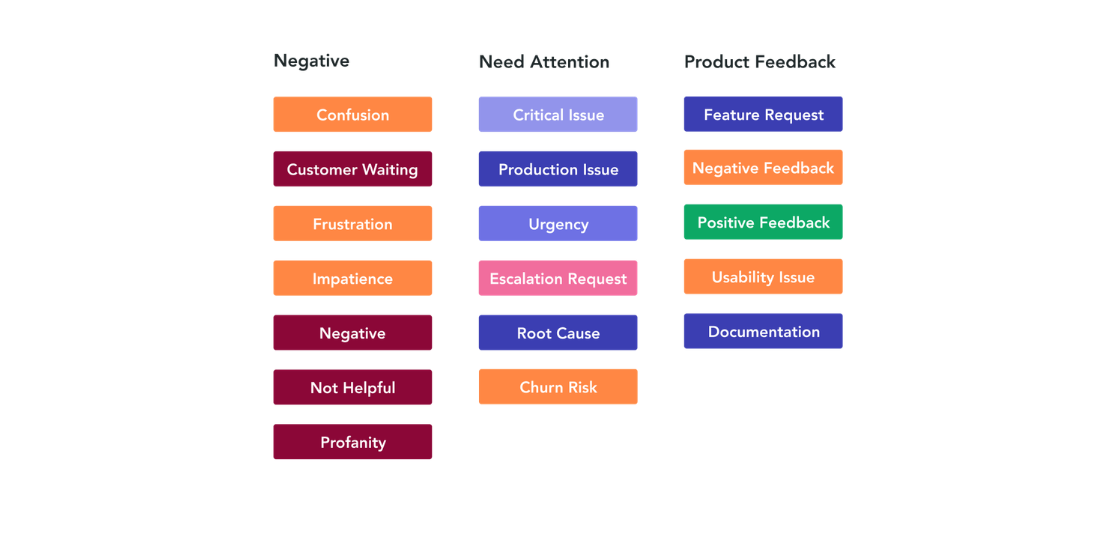

Signal extraction beyond sentiment

Sentiment is necessary but not sufficient. SupportLogic’s platform extracts 40+ distinct signals from support conversations — things like customer impact, churn risk, confusion, urgency, commitment made, deadline mentioned, and executive involvement — many of which are only loosely correlated with raw sentiment. Our Escalation Agent combines these signals with case history, responsiveness, and account data to predict escalations before the customer formally escalates. (For a deeper look at how these signals feed the prediction model, our tech guide on SupportLogic AI and ML walks through the architecture.)

Conversation-level context

Every signal is scored in the context of the full thread, the account history, and the customer’s baseline tone. A message that looks neutral in isolation can be flagged as high-risk if the customer’s last five messages have trended increasingly terse, if commitments have been missed, or if the conversation has already been reassigned twice. This is the “memory” that single-message classifiers lack.
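The three conditions in that paragraph can be sketched as a simple rule. This is not SupportLogic's implementation: the word-count slope as a proxy for terseness, the threshold of one word per message, and the function names are all assumptions made for illustration.

```python
def terseness_trend(messages: list[str]) -> float:
    """Least-squares slope of word count over message index.

    A negative slope means the messages are getting shorter.
    """
    counts = [len(m.split()) for m in messages]
    n = len(counts)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(counts) / n
    numerator = sum((i - mean_x) * (c - mean_y) for i, c in enumerate(counts))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator


def flag_high_risk(
    customer_messages: list[str],
    missed_commitments: int,
    reassignments: int,
    window: int = 5,
    slope_threshold: float = -1.0,  # assumed: losing more than one word per message
) -> bool:
    """Flag a thread if any one of the three context conditions holds."""
    recent = customer_messages[-window:]
    getting_terser = terseness_trend(recent) < slope_threshold
    return getting_terser or missed_commitments > 0 or reassignments >= 2


# Five customer messages with 20, 15, 10, 5, and 2 words.
shrinking = [" ".join(["word"] * k) for k in (20, 15, 10, 5, 2)]
steady = ["ok thanks"] * 5

print(flag_high_risk(shrinking, missed_commitments=0, reassignments=0))  # True
print(flag_high_risk(steady, missed_commitments=0, reassignments=0))     # False
```

None of the inputs to this rule is the text of the latest message on its own, which is the sense in which the signal lives in the thread rather than in the sentence.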

Domain-tuned language models

The models powering all of this are trained on the language of B2B post-sales support, not on movie reviews, tweets, or Amazon product feedback. As our platform page puts it, the NLP is built with the language of B2B in mind. That’s not a slogan; it’s the reason the same sentence (“We ran kill -9 and it finally cleared”) gets correctly scored as relief rather than rage.

What you can actually do with it

The point of all this engineering isn’t to produce prettier dashboards. It’s to change what support teams can do. Customers running SupportLogic in production are reporting results like these:

- Predict escalations before they happen. 8x8 reached 90% accuracy on escalation prediction across ~20,000 monthly cases by acting on extracted signals rather than waiting for explicit escalation requests.

- Reduce escalation rates. Qlik reduced escalations by 30% in six months using sentiment-driven prioritization, as documented in our customer sentiment analysis overview.

- Improve SLA performance. Databricks reduced SLA misses by 40% and improved CSAT by feeding sentiment signals into case assignment and escalation workflows.

- Coach agents on the interactions that matter. The Coaching Agent surfaces the specific phrases and moments in a conversation that drove sentiment up or down, so QA reviews are grounded in evidence rather than sampling bias.

- Replace biased surveys with real voice-of-the-customer. Traditional NPS and CSAT surveys suffer from low response rates and self-selection bias. Analyzing 100% of support interactions — rather than the 5–10% of customers who respond to surveys — yields a dramatically less distorted picture.

A checklist for evaluating sentiment analysis in B2B support

If you’re evaluating a sentiment analysis solution for an enterprise support team, here are the questions that separate the demos from the production-ready systems:

- Is the model domain-tuned for B2B support, or trained on general-purpose text? Ask for the training corpus.

- Does it produce aspect-level sentiment, or just a single score per message? If it’s one score, mixed-sentiment tickets will be invisible to you.

- Does it score in context, or message-by-message? Threaded conversations need threaded analysis.

- How does it handle technical jargon, logs, and code blocks? Paste in a real stack trace and see what happens.

- Does it extract structured signals beyond sentiment (urgency, churn risk, customer impact, commitment tracking)? Polarity alone is not actionable.

- How does it handle sarcasm, understatement, and polite-but-urgent language? Try the “earliest convenience” test.

- Is there a feedback loop? Support vocabulary evolves constantly. A model that can’t learn from agent disagreements will decay.

- How does it handle multilingual input and code-mixing? If your customers are global, this is non-negotiable.

- Does it integrate with your ticketing system in real time, or does it run as an after-the-fact analytics job? Proactive support requires streaming signals.

The bottom line

Sentiment analysis got a reputation as a “solved” problem because the demo is easy and the off-the-shelf libraries are plentiful. It is not solved for enterprise support. The combination of technical jargon, polite registers, mixed sentiment, threaded context, and multilingual input makes consumer-grade sentiment tools not just inaccurate but actively misleading — they’ll tell you a customer is happy right up until they’re not your customer anymore.

Deep sentiment analysis — fine-grained polarity, emotion detection, aspect-based decomposition, domain-tuned models, and signal extraction running in real time against conversation-level context — is what it actually takes to hear what a B2B customer is saying, including the parts they aren’t saying out loud.

Further Reading

- SupportLogic: Sentiment Agent

- SupportLogic: Customer Sentiment Analysis overview

- SupportLogic: Platform

- SupportLogic: AI & Machine Learning technical guide

- SupportLogic: Customer Escalation Prediction Model

- Stanford NLP: Sentiment Analysis project

- ScienceDirect: Recent advancements and challenges of NLP-based sentiment analysis

- Springer: Sentiment analysis and the complex natural language